If you were on a Zoom call and your executive told you, eye to eye, to perform a financial transaction, would you stop to think about whether the person on your screen is real? Since deepfakes – images or recordings that have been convincingly altered and manipulated to misrepresent someone as doing or saying something that was never done or said – are becoming more common, you probably should. Get to know more about this modern technology that can be used for fun but also for cybercrime.

Is it real?

Thanks to deep learning (a form of machine learning), people can alter images, videos, and audio recordings by placing someone's face, body, or voice into a different environment and making it seem like they said or did things that never actually happened. The result? A deepfake.

Did you know that a computer might be able to create an audio deepfake after listening to only 10-20 seconds of someone's voice?

This attention-grabbing technology has now become widely accessible to the public. Apps such as FaceApp enable people to create deepfake videos of virtually anyone. You can, for instance, put your face into a popular music video or become the main actor in an entertaining movie scene. Some deepfakes on the internet can be amusing, but in the wrong hands, fabricated video or audio recordings have also become cybercrime tools used to attack individuals or businesses.

Deepfake as a bully, political manipulator, and cyber-attacker

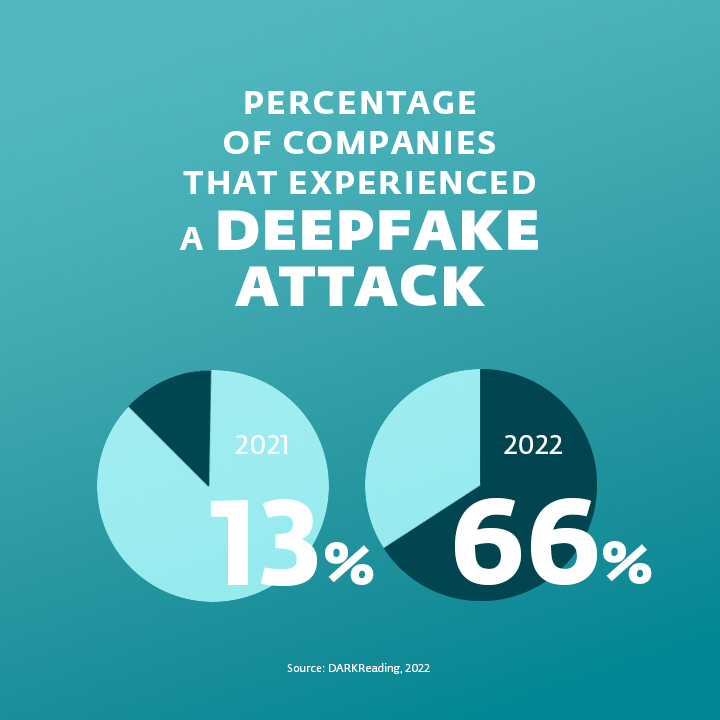

Apart from serving as amusement, deepfakes can also have serious legal, personal, or even political effects. Furthermore, the fact that cybercriminals can now easily fabricate audio or video recordings makes social engineering even trickier.

In the cybersecurity field, deepfakes can be used for extortion, fraud, and manipulation. For instance, someone could use a fake video to blackmail a CEO, demanding payment for the video's destruction and threatening to damage the company's reputation otherwise. More commonly, companies may experience "vishing" (voice phishing), a type of phishing that can use deepfake audio to manipulate employees into believing they are following the orders of their employers.

One of the most famous cases of a deepfake attack happened in 2019 when the CEO of a UK-based energy firm received a phone call from his boss, who told him to transfer almost a quarter of a million pounds to a supplier in Hungary. Since the voice sounded like that of his German chief, the CEO followed the orders. Soon after, another call followed, urging the CEO to send more money. This made him suspicious, so he decided to contact the authorities. It was later revealed that the CEO had fallen victim to vishing, which most likely used deepfake technology to imitate the German chief's voice.

In another deepfake case from 2022, Patrick Hillmann, chief communications officer at cryptocurrency exchange Binance, started receiving calls from several people claiming they had been in contact with him. According to them, they were discussing the opportunity of listing their assets on Binance, and they were supposed to receive a Binance token in return for some of their cash.

Hillmann was surprised, as he didn't know any of the callers and was sure he had never been in contact with them. Later, Hillmann discovered that cybercriminals had used some of his previously published interviews to create a deepfake copy of his persona and stage seemingly business-related Zoom calls. In this case, the people who fell victim to the deepfake lost their money, but the issue could have also seriously affected the company's reputation.

Don't fall for deepfakes

Looking at some of the more elaborate deepfakes, you may feel like there is no way to determine whether what you see online is fake or real. It is, in fact, beneficial to maintain some scepticism. If you are even slightly doubtful about what you see on your screen, you are already one step closer to staying secure.

But how can you identify a deepfake? Audio deepfakes are the more challenging ones to uncover, as they may sound exactly like a regular human voice. Still, some programs can now detect deepfake audio. How? The human voice can produce only a limited range of sounds, whereas a "computer's voice" is not so limited and thus sounds ever so slightly different.

Can you identify a deepfake without specialized software? It may be difficult, but it is possible. Here’s what to ask yourself when you are trying to verify the authenticity of a video or an audio recording:

- Does the recording seem hard to believe? Is the content scandalous and clearly trying to provoke an emotional response from the viewers/listeners? If so, that may be the first sign to approach it sceptically and to verify the information you see or hear.

- Is there anything suspicious about the details? Deepfake creators frequently have trouble duplicating more subtle expressions, such as blinking, breathing, or delicate hair and facial movements. Occasionally, there may also be slight glitches around the areas that move the most. In audio recordings, you should listen for unnatural pauses between words or, on the contrary, overly perfect speech.

- Does the body move naturally? When someone speaks to us, we tend to look at their face rather than their body. Deepfake creators also primarily focus on replicating facial expressions, so if the body shape or the movements of the person in the video seem slightly off, you may be looking at a deepfake.

- Does the audio fit the video? Sometimes, deepfake creators fail to match the audio recording with the person's movements. Once again, watch the face carefully to see whether the movements of the mouth match the words allegedly coming out of it.

- Is the lighting consistent? Or does the head of the person seem unnaturally light or dark? Lighting discrepancies may be a giveaway.
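
For teams that want to log these checks when reviewing a suspicious recording, the questions above can be turned into a simple tally. The following is only a minimal sketch: the flag names, the `count_red_flags` and `advice` helpers, and the threshold of one flag are all hypothetical choices made for illustration. It does not detect deepfakes; it merely records what a human reviewer noticed.

```python
# Hypothetical red-flag names, paraphrased from the checklist above.
RED_FLAGS = (
    "hard_to_believe",        # scandalous content aimed at provoking emotion
    "suspicious_details",     # odd blinking, breathing, hair, glitches, pauses
    "unnatural_body",         # body shape or movements seem slightly off
    "audio_video_mismatch",   # mouth movements do not match the words
    "inconsistent_lighting",  # head looks unnaturally light or dark
)


def count_red_flags(observations: dict[str, bool]) -> int:
    """Count how many checklist items the reviewer marked as present."""
    unknown = set(observations) - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"Unknown checklist items: {sorted(unknown)}")
    # Items the reviewer did not mention are treated as "not noticed".
    return sum(1 for flag in RED_FLAGS if observations.get(flag, False))


def advice(observations: dict[str, bool], threshold: int = 1) -> str:
    """Suggest a next step; the threshold is an assumption, not a standard."""
    if count_red_flags(observations) >= threshold:
        return "verify before acting"
    return "no red flags noted, stay sceptical"


# Example: the reviewer noticed a lip-sync problem and odd lighting.
notes = {"audio_video_mismatch": True, "inconsistent_lighting": True}
print(count_red_flags(notes), "-", advice(notes))
```

Even a single noted flag leads to "verify before acting" in this sketch, in line with the advice that slight doubt is already a reason to double-check.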

Make digital security education entertaining

IT teams should aim to inform their companies about deepfakes and their risks. Since deepfakes are a fascinating example of technology that can be used by both entertainers and cybercriminals, you should strive to make employee training interactive and playful. For instance, send the employees some videos and let them guess which ones are real and which are not. If you encourage active participation, it is more likely that the employees will remember what they have learned and will use their knowledge to keep your company more secure.
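
The "real or fake" guessing game described above can be scored with a few lines of code. This is a rough sketch under stated assumptions: the clip names, the `score_quiz` and `missed_clips` helpers, and the convention that `True` means "deepfake" are all made up for illustration.

```python
def score_quiz(answer_key: dict[str, bool], guesses: dict[str, bool]) -> float:
    """Return the fraction of clips labelled correctly (True means deepfake).

    Clips the employee did not answer count as incorrect.
    """
    if not answer_key:
        raise ValueError("The answer key must contain at least one clip.")
    correct = sum(
        1 for clip, is_fake in answer_key.items() if guesses.get(clip) == is_fake
    )
    return correct / len(answer_key)


def missed_clips(answer_key: dict[str, bool], guesses: dict[str, bool]) -> list[str]:
    """List the clips that were answered incorrectly or skipped."""
    return [clip for clip, is_fake in answer_key.items() if guesses.get(clip) != is_fake]


# Hypothetical training round with four clips.
answer_key = {"clip_a": True, "clip_b": False, "clip_c": True, "clip_d": False}
guesses = {"clip_a": True, "clip_b": True, "clip_c": True}  # clip_d was skipped

print(f"Score: {score_quiz(answer_key, guesses):.0%}")
print("Review together:", missed_clips(answer_key, guesses))
```

Listing the missed clips rather than only the score gives the trainer something concrete to walk through with employees afterwards.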